In the first view (Running state), only the first call can be discarded, as it’s an active-polling loop. However, the highlighted method call is the same in both views, and it’s clear that something important and substantial is happening here: this call is spending a large amount of time blocked. It’s also clear that it’s spending a substantial amount of time in the Running state.

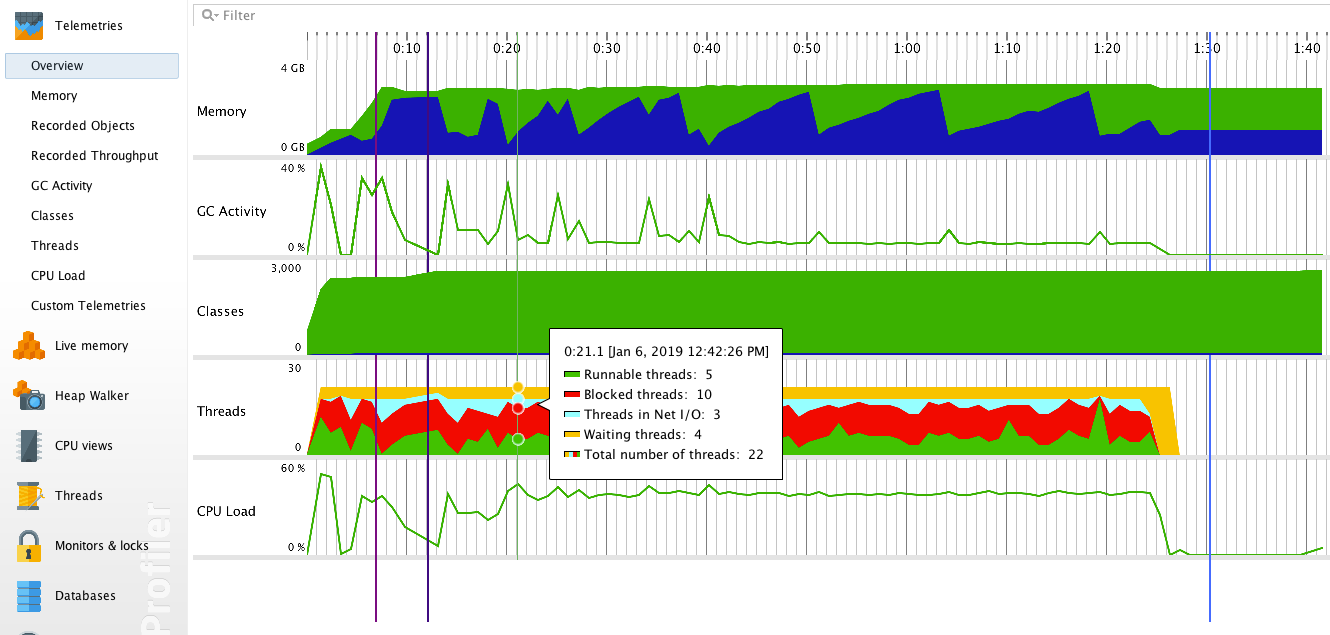

If threads are blocked, waiting on I/O or socket activity, the view must be switched to “All States” for that time to be adequately reflected. However, the runtime of the internal service may not be reliable, depending on your development environment sizing, etc. Also, the performance penalties on profiled JVMs do not extend to dependencies outside the JVM. This means that your tier may appear to perform worse than it actually does compared to other components.

To exemplify the “running-versus-blocked” issue, here are the Hot Spots, or top CPU runtimes, in the Running state for a test, and the Hot Spots when All States are considered for the same test. There are huge differences in runtimes here, but what can we discard? The first four methods in the All States view are actually blocked threads just listening for requests. They are not actually doing anything and can be safely ignored.

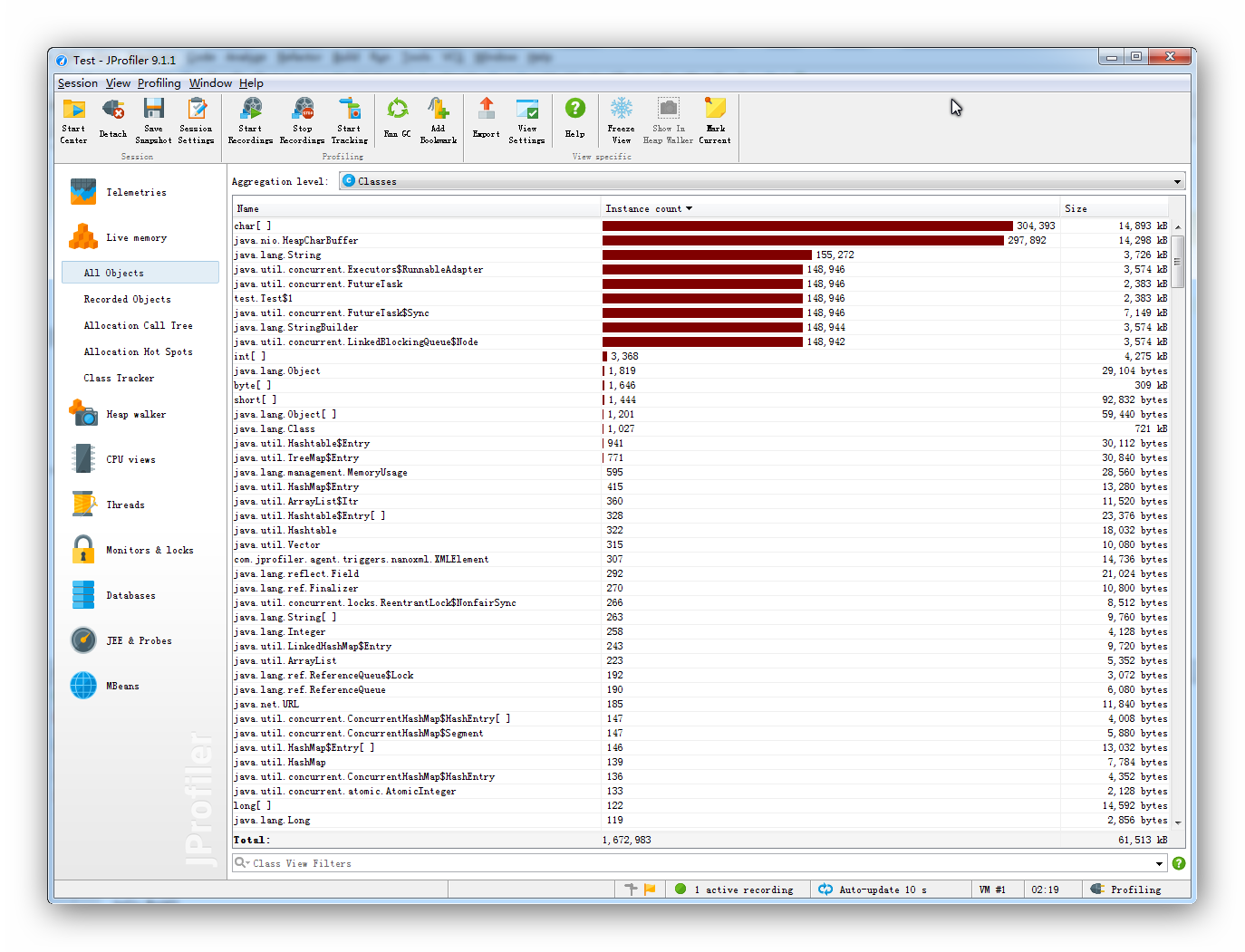

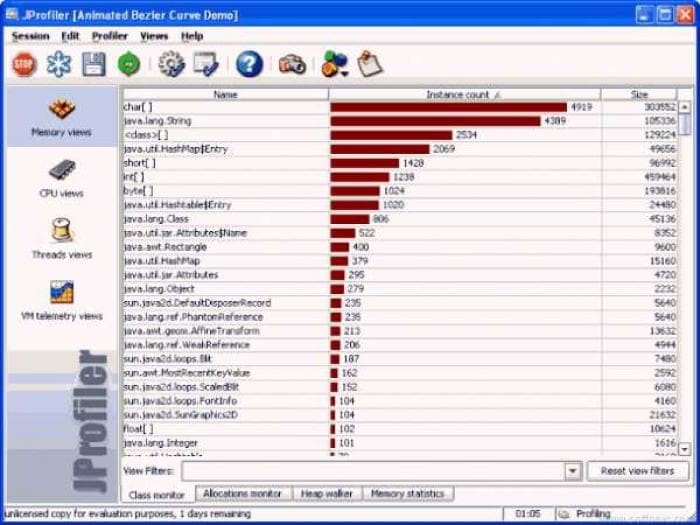
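The gap between the Running view and the All States view can be reproduced outside of any profiler. The following is a minimal sketch, not how JProfiler itself measures anything: it uses the standard `ThreadMXBean` to compare wall-clock time with CPU time for one task that sleeps (standing in for a thread blocked on I/O) and one that busy-polls. The class and method names are made up for illustration.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.ThreadMXBean;

final class RunningVsBlocked {
    /** Returns {wallNanos, cpuNanos} spent by the task on the current thread. */
    static long[] measure(Runnable task) {
        ThreadMXBean mx = ManagementFactory.getThreadMXBean();
        // CPU timing is optional on some JVMs; fall back to 0 if unavailable.
        boolean cpuOk = mx.isCurrentThreadCpuTimeSupported();
        long wallStart = System.nanoTime();
        long cpuStart = cpuOk ? mx.getCurrentThreadCpuTime() : 0L;
        task.run();
        long cpu = cpuOk ? mx.getCurrentThreadCpuTime() - cpuStart : 0L;
        long wall = System.nanoTime() - wallStart;
        return new long[] { wall, cpu };
    }

    /** Stand-in for a thread blocked on I/O: time passes, almost no CPU is used. */
    static void blockFor(long millis) {
        try {
            Thread.sleep(millis);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    /** Stand-in for an active-polling loop: the thread stays on the CPU. */
    static void spinFor(long millis) {
        long end = System.nanoTime() + millis * 1_000_000L;
        while (System.nanoTime() < end) {
            // busy-wait on purpose
        }
    }

    public static void main(String[] args) {
        long[] blocked = measure(() -> blockFor(200));
        long[] spinning = measure(() -> spinFor(200));
        System.out.printf("blocked:  wall=%d ms, cpu=%d ms%n",
                blocked[0] / 1_000_000, blocked[1] / 1_000_000);
        System.out.printf("spinning: wall=%d ms, cpu=%d ms%n",
                spinning[0] / 1_000_000, spinning[1] / 1_000_000);
    }
}
```

Both tasks take roughly the same wall-clock time, but only the polling loop accumulates meaningful CPU time. A view restricted to running threads would make the sleeping task look nearly free, which is the effect described above for calls that wait on a dependent service.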

JProfiler has two major modes of analyzing CPU time: profiled and sampled. There are major advantages and drawbacks to both, and understanding the differences is a key element to gathering usable data. “Profiled mode” works by adding instrumentation to selected classes, recording method invocations, how long they took, and what other methods were called. The results are summed up for the whole call tree. Like JMeter, but at the method instead of the API level, we can see average, maximum, and minimum runtimes. We can also see how many times a particular method was called.

In the method statistics report, the line in blue at the top shows the method name (redacted), the total time in this method, the number of calls made to it, the average, median, minimum, maximum, standard deviation, and outlier coefficients. The provided graph makes this more human-parsable. It’s clear from this data that out of the 20 calls to this method, one of them took far longer than the rest, which were all fairly comparable at 40 to 60 ms. This data gives us a clue as to what the issue might be: something happens on the first call that gets cached or otherwise sped up in subsequent invocations. Further analysis confirmed that a service we depend on has a cache that was missed on the first invocation.

Dependent service runtime brings up a few more points. By default, JProfiler shows runtime for threads in the “Running” state.
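To make the per-method statistics concrete, here is a small sketch that computes the same kind of summary (call count, total, average, median, minimum, maximum, standard deviation) from a list of call durations. It is an illustration only, not JProfiler’s implementation; the class name is invented, and the sample data (19 calls at 50 ms plus one slow 2000 ms call) is hypothetical, chosen to resemble the cold-cache pattern described above.

```java
import java.util.Arrays;

final class MethodStats {
    /** Per-method summary of call durations, all in milliseconds. */
    static final class Summary {
        final int calls;
        final double total, mean, median, min, max, stdDev;

        Summary(int calls, double total, double mean, double median,
                double min, double max, double stdDev) {
            this.calls = calls;
            this.total = total;
            this.mean = mean;
            this.median = median;
            this.min = min;
            this.max = max;
            this.stdDev = stdDev;
        }
    }

    static Summary summarize(double[] durationsMs) {
        if (durationsMs.length == 0) {
            throw new IllegalArgumentException("no calls recorded");
        }
        double[] sorted = durationsMs.clone();
        Arrays.sort(sorted);
        int n = sorted.length;
        double total = Arrays.stream(sorted).sum();
        double mean = total / n;
        // Median: middle element, or the mean of the two middle elements.
        double median = (n % 2 == 1)
                ? sorted[n / 2]
                : (sorted[n / 2 - 1] + sorted[n / 2]) / 2.0;
        // Population standard deviation (divides by n, not n - 1).
        double sumSquares = 0.0;
        for (double d : sorted) {
            sumSquares += (d - mean) * (d - mean);
        }
        double stdDev = Math.sqrt(sumSquares / n);
        return new Summary(n, total, mean, median, sorted[0], sorted[n - 1], stdDev);
    }

    public static void main(String[] args) {
        // Hypothetical data: one cold-cache call followed by 19 warm calls.
        double[] durations = new double[20];
        Arrays.fill(durations, 50.0);
        durations[0] = 2000.0;
        Summary s = summarize(durations);
        System.out.printf("calls=%d total=%.1f mean=%.1f median=%.1f min=%.1f max=%.1f stdDev=%.1f%n",
                s.calls, s.total, s.mean, s.median, s.min, s.max, s.stdDev);
    }
}
```

With data shaped like this, the median stays at the typical call time while the average and standard deviation are pulled up by the single slow call. A large gap between median and maximum is the signal that points at a first-call effect such as a cache miss.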

I just completed a long analysis of our V2 API using JProfiler for API performance testing and want to spend some time (ok, the lion’s share of this post) discussing what we learned from this analysis. JVM profiling, by and large, aims to identify long-running or redundant method invocations. It can also be used to identify memory issues, but we didn’t use it for that purpose in this particular exercise.

We do not use JProfiler in our production environment because it does its magic by modifying the JVM and taking measurements from the runtime environment. Therefore, it negatively impacts performance. How much depends on exactly how it’s used, but more on that later. We run our tests against a development environment, which means the performance of the services we depend on is unpredictable and certainly not a reliable simulation of their production counterparts. Due to hardware constraints and our testing goals, 90 percent of my tests were single-threaded sequential requests, often made against the same datasets. It should be readily apparent that profiled data cannot be used to establish or validate SLAs. It is primarily used to look for algorithmic issues.
